
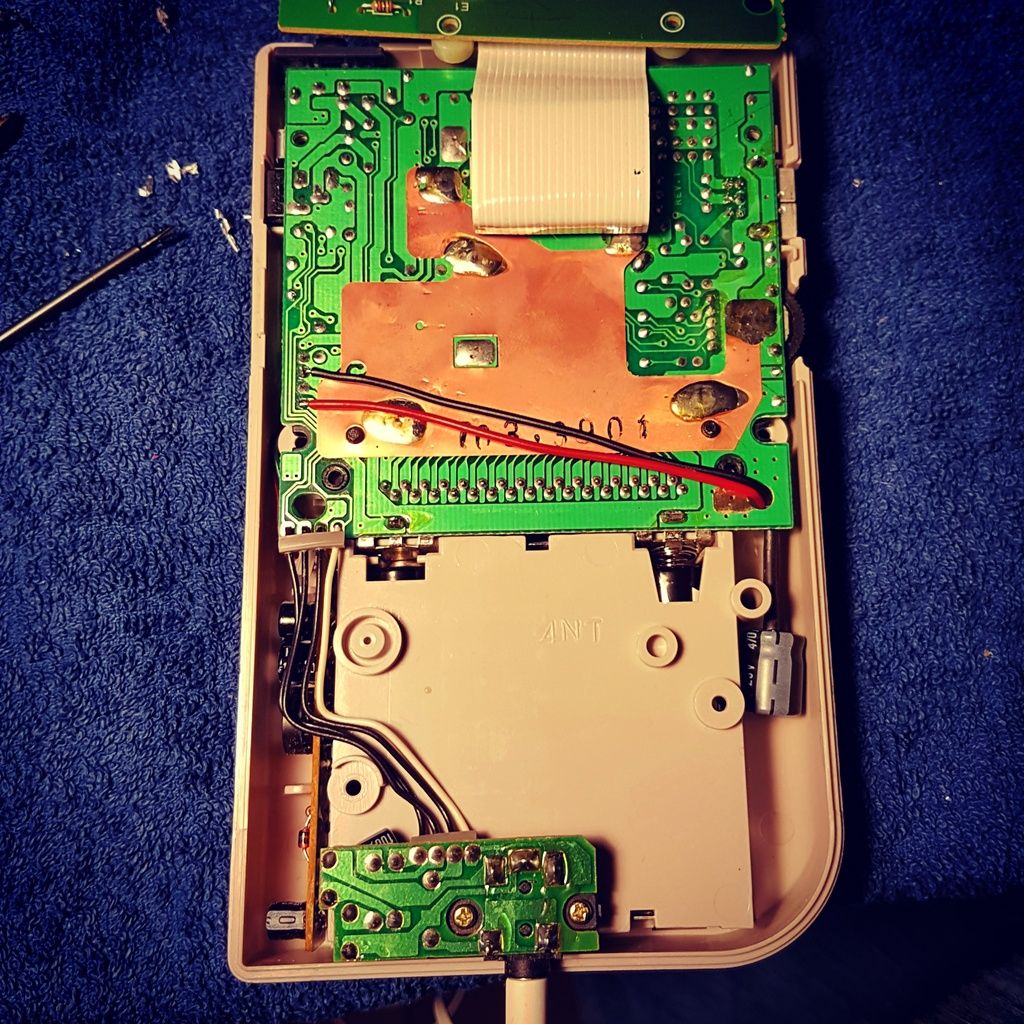

The Game Boy is an 8-bit handheld video game device developed and manufactured by Nintendo. It was released in Japan on April 21, 1989, in North America on July 31, 1989, and in Europe on September 28, 1990. It is the first handheld console in the Game Boy line, and was created by Gunpei Yokoi and Nintendo Research & Development 1, the same staff who had designed the Game & Watch series as well as several popular games for the Nintendo Entertainment System. The Game Boy was a tremendous success: combined with its successor, the Game Boy Color, it sold 118.69 million units worldwide. Upon its release in the United States, it sold its entire shipment of one million units within a few weeks.

Alright, let's get this straight. I saw in the official Game Boy documents that the CPU core inside the Game Boy is clocked at 1.05 MHz, and they call that the 'system operating frequency'.

But what makes me question this is that someone else stated that Nintendo was only measuring in machine cycles rather than clock cycles, which makes no sense to me, because another dev who worked on Tomb Raider for the GBC stated that the internal CPU inside the SoC is in fact clocked at 1.05 MHz, and he also mentioned some kind of method for verifying the clock speed. What I believe is that the Game Boy CPU chip (the SoC with all of its internals, such as the PPU, the audio mixer, etc.) has a master clock of 4.1943 MHz, and the CPU core within the SoC is clocked at 1.05 MHz.

I believe this because systems such as the Game Gear have a master crystal running at something like 50 MHz, and that is surely not the clock rate of the CPU. That is the master clock for all of the logic chips, and if you're talking about the one-chip version, it is the master clock for the SoC. So the evidence leads me to believe that the 4.1943 MHz clock is the master clock rate of the GB SoC, not the CPU. Please correct me if you're sure I'm wrong, or give some input on this topic.

The only people who will know for sure what's going on inside that chip are the engineers who designed it. Having said that, the machine cycles on a Z80 were always about 4 clock cycles long (on average - the original Z80 had somewhat variable timing, and M1 could be 4-6 T-states long, while other cycles were 3-5 T-states long). It seems that the chip used in the Game Boy has fixed machine-cycle timing and every machine cycle always takes exactly 4 T-states, which would explain where that 1.05 MHz figure came from with a 4.1943 MHz clock.

But I really think that the CPU core clock speed is 1.05 MHz, because when people overclock the 4.1943 MHz clock, the whole system speeds up, as if you had overclocked the whole SoC, not just the CPU. And I know that's not what happens when only the CPU runs faster, because you can play DMG games in CGB mode in BGB, which doubles the CPU speed like real CGB mode, and it doesn't make the game unplayably fast; it just acts as if you had overclocked the real CPU (reducing lag, slowdowns and such). So I really think that 4.1943 MHz is the clock speed of the whole SoC rather than the clock speed of the CPU core.

Calling the DMG processor a 'SoC' is stretching the definition of that term past breaking point IMO. It's just a CPU with some specialized on-chip peripherals - using the definition you seem to be using, the chip that controls my microwave would be a SoC too, since it can generate tones and drives an LCD display.
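The arithmetic behind that 1.05 MHz figure can be checked with a short Python sketch. It assumes the nominal DMG clock of 2^22 Hz (4,194,304 Hz, the '4.1943 MHz' above) and exactly 4 clocks per machine cycle, as described in the post; the function name is illustrative only.

```python
# Nominal DMG master clock: 2**22 Hz = 4,194,304 Hz ("4.1943 MHz").
MASTER_CLOCK_HZ = 4_194_304
T_STATES_PER_M_CYCLE = 4  # assumed: every DMG machine cycle is exactly 4 clocks


def machine_cycle_rate_hz(master_hz: int, t_per_m: int = T_STATES_PER_M_CYCLE) -> float:
    """Rate at which machine cycles (M-cycles) complete, in Hz."""
    return master_hz / t_per_m


if __name__ == "__main__":
    m_rate = machine_cycle_rate_hz(MASTER_CLOCK_HZ)
    print(f"{MASTER_CLOCK_HZ / 1e6:.4f} MHz clock -> {m_rate / 1e6:.4f} MHz M-cycle rate")
```

Rounded to two decimals, 1,048,576 machine cycles per second is the 1.05 MHz quoted in Nintendo's documentation.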

Same thing goes for the CPU in the NES (it has integrated audio hardware) - but nobody would consider those SoCs. Saying that the same clock controls everything is the same as saying it only has a single clock input - and that's pretty obvious just from looking at the schematic diagram. It also implies that the on-chip peripherals all run synchronously with the CPU - which is actually a strong argument against it being a SoC design, since SoCs typically use internal buses with synchronization and arbitration logic so the different modules can run in different clock domains.

But going back to the original question, the basic reason I think the CPU clock really is 4 MHz is simply that it's an 8080/Z80-architecture processor, and those have always required multiple clocks per machine cycle.

Certainly you could (at the cost of substantial additional hardware) design a 1T Z80 core - but doing that and then sticking a /4 clock prescaler on it would be utterly senseless. Basically, it falls into the category of things no rational engineer would do, so it almost certainly hasn't been done.

Here is a post on Wikipedia from a dev who worked on Tomb Raider for the GBC: 'I wrote the first Tombraider game on the GBC & my engine was used by the second game. The CPU is clocked at 1.05MHz but can be switched to run at 2.1MHz. The DMA of the sprites & BG meant the game ran at 30Hz. Switching back to 1.05MHz and dispatching a HALT instruction was tested and proved to increase battery-life.

The clock was used by the video core & the CPU but was divided for the latter. I can assure you that it didn't run at 8MHz. If it did, I would have rendered the 3D model into an array of OBJs'. (Preceding comment added 21:44, 17 July 2017 (UTC).)

So by your logic, since the Game Boy has a crystal at 4.1943 MHz and you say that is the CPU speed, the GBA's CPU must be clocked at 4 MHz, because its crystal is in fact a 4 MHz one (yes, I know the CPU core really runs at 16 MHz).

I know you guys are saying that the 1.05 MHz figure is machine cycles, but I still think that is just a misconception or a coincidence, and that it really is the CPU core clock speed. This is because, like I said, if you overclock the GB CPU, the game physically speeds up, and the same goes for overclocking the GBA's master clock (which is 4 MHz for the SoC, while the CPU core is clocked at 16 MHz): the game speeds up, not because the CPU is being overclocked, but because the whole IC is being overclocked.

Even if you overclock the DS CPU, the games speed up, and this is not because the games weren't made to run at that frequency; it's for the same reason as above. You guys do know that when you overclock a system's CPU (like the Genesis) it improves performance rather than speeding the game up, right? The only games that speed up when overclocked are old DOS games (which were software rendered, by the way), and the Game Boy is not like that. And like I said, you can run an original GB game in GBC mode through BGB, and the result of the GBC's increased clock frequency is that the game runs more smoothly at the times it would normally slow down. But if you overclock the GB's 4 MHz crystal to 8 MHz, the game speeds up and becomes unplayable.
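Most Game Boy games are paced by the LCD frame, so a rough way to see why a faster crystal speeds the game up is to compute the frame rate from the master clock. This Python sketch assumes the commonly documented DMG frame timing of 154 lines of 456 clocks each (70,224 clocks per frame) and that the game advances one logic step per frame; the helper names are hypothetical.

```python
# Assumed DMG LCD timing: 154 lines (144 visible + 10 VBlank) of 456 clocks each.
CLOCKS_PER_LINE = 456
LINES_PER_FRAME = 154
CLOCKS_PER_FRAME = CLOCKS_PER_LINE * LINES_PER_FRAME  # 70,224

NOMINAL_CLOCK_HZ = 4_194_304  # stock DMG crystal


def frame_rate_hz(clock_hz: float) -> float:
    """LCD frames per second for a given master clock."""
    return clock_hz / CLOCKS_PER_FRAME


def game_speed_ratio(clock_hz: float, nominal_hz: float = NOMINAL_CLOCK_HZ) -> float:
    """Speed of a frame-locked game relative to stock (>1 means faster)."""
    return frame_rate_hz(clock_hz) / frame_rate_hz(nominal_hz)


if __name__ == "__main__":
    for label, hz in [("stock crystal", NOMINAL_CLOCK_HZ), ("doubled crystal", 2 * NOMINAL_CLOCK_HZ)]:
        print(f"{label}: {frame_rate_hz(hz):.2f} fps, game speed x{game_speed_ratio(hz):.2f}")
```

With the stock clock this gives roughly 59.7 frames per second; doubling the crystal doubles the frame rate, and with it the speed of any frame-locked game, because the LCD, sound and CPU all run from that one clock.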

You are thinking of this like a PC CPU, where overclocking gives the CPU more available cycles but the software doesn't actually have to use them. That is not how it works here: you are making the instructions happen faster, so the game speeds up when you overclock. The cycles just happen faster and everything is sped up. PC software used to work like this too, a long time ago. Changing the clock does speed up or slow down the Game Boy. This is why I sell a replacement crystal for the Super Game Boy, so it gets the correct clock and games play at the correct speed. This is well documented. I'm really not sure what point you are trying to make anymore.

No, what we are saying is that 8080/Z80-architecture CPUs typically take about 4 clocks per machine cycle, so the fact that the one embedded in the DMG takes exactly 4 clocks per machine cycle is extremely unlikely to be a coincidence.

The suggestion that you're making (that it really completes one machine cycle per clock, but prescales the clock by 4) doesn't make sense for a couple of reasons. The first is that the ROM access time is consistent with a 4 MHz clock and not a 1 MHz one. The second I've already mentioned: if you designed a 1T core, you would just run it at the full clock speed and get 4x the performance. Making it 1T and then dividing the clock by 4 would be an utterly incomprehensible design decision that would greatly increase the complexity of the logic and the die size for absolutely no benefit.

This is just 'it's all in one clock domain', which nobody disputes. It's also entirely tangential to the basic claim you are making, since the real issue is that the whole chip is clocked from a single source, and that would be the case whether it were implemented as a 4T CPU with a /1 clock or a 1T CPU with a /4 clock. There is still only one physical clock pin on the package, and hence all the timing must be derived from it, since there is no other option. If the question you're really asking is 'can you overclock the CPU in the DMG without affecting the video and audio timing', then I'm pretty sure the answer is no - but it would be 'no' even if the clock were derived in the manner you are assuming.
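The point that both designs look identical from the outside can be made concrete with a toy model. This is a hypothetical Python sketch, not a description of the real DMG silicon: it only assumes a single master clock input and computes the machine-cycle rate for a 4T core fed the clock directly and for a 1T core behind a /4 prescaler.

```python
from dataclasses import dataclass

MASTER_HZ = 4_194_304  # single clock input on the package (nominal DMG value)


@dataclass(frozen=True)
class CpuTimingModel:
    """Hypothetical timing model; says nothing about how the real die is built."""
    name: str
    prescaler: int          # master clocks per CPU core clock
    core_clocks_per_m: int  # core clocks per machine cycle

    def m_cycle_rate_hz(self, master_hz: float) -> float:
        # Everything is derived from the one master clock.
        return master_hz / (self.prescaler * self.core_clocks_per_m)


designs = [
    CpuTimingModel("4T core, /1 clock", prescaler=1, core_clocks_per_m=4),
    CpuTimingModel("1T core, /4 prescaler", prescaler=4, core_clocks_per_m=1),
]

if __name__ == "__main__":
    for d in designs:
        print(f"{d.name}: {d.m_cycle_rate_hz(MASTER_HZ):,.0f} M-cycles/s")
```

Both variants report 1,048,576 machine cycles per second at the stock clock, and both scale together when the crystal changes, so neither the 1.05 MHz figure nor the overclocking behaviour can tell them apart.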

The way the clocking works in the AGB is not terribly relevant, since it's a later system designed at a point when putting PLL clock multipliers on-chip and using a lower-frequency (and hence more mechanically robust) crystal had become standard practice - but that wasn't the case back when the DMG came out in '89.

Guys, I found some info in the official Game Boy Color programming manual, and it states this: 'The speed of the CGB CPU can be changed to suit different purposes. In normal mode, each block operates at the same speed as with the DMG CPU. In double-speed mode, all blocks except the liquid crystal control circuit and the sound circuit operate at twice normal speed.
Normal mode: 1.05 MHz (CPU system clock)
Double-speed mode: 2.10 MHz (CPU system clock)'

So this means that when you overclock the main crystal by replacing it with a faster one, you overclock the LCD and sound blocks as well, which speeds up the gameplay. So if we could choose which block in the CPU to overclock, we could hypothetically reduce slowdowns in gameplay even further than the GBC's double-speed mode does!
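The effect of double-speed mode described in that manual excerpt can be sketched by counting how many CPU machine cycles fit into one LCD frame. The Python below assumes the DMG frame length of 70,224 clocks at the normal rate and that, as the manual says, LCD timing is unchanged in double-speed mode while the CPU runs twice as fast; the function name is made up for illustration.

```python
# Assumed DMG LCD frame length in master clocks (154 lines x 456 clocks).
# Per the CGB manual excerpt, LCD timing does not change in double-speed mode.
CLOCKS_PER_FRAME = 70_224
T_STATES_PER_M_CYCLE = 4  # CPU clocks per machine cycle


def cpu_m_cycles_per_frame(double_speed: bool) -> int:
    """Machine cycles the CPU completes during one LCD frame."""
    # In double-speed mode the CPU clock is doubled but the frame length is not.
    cpu_clocks = CLOCKS_PER_FRAME * (2 if double_speed else 1)
    return cpu_clocks // T_STATES_PER_M_CYCLE


if __name__ == "__main__":
    for mode, flag in [("normal", False), ("double-speed", True)]:
        print(f"{mode}: {cpu_m_cycles_per_frame(flag):,} M-cycles per frame")
```

Normal mode gives 17,556 machine cycles per frame and double-speed mode 35,112, while the frame rate stays the same, which is why a game gets more work done per frame (fewer slowdowns) without running faster overall.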

Also, why did Nintendo choose to state the clock rate in machine cycles rather than clock cycles? This must have caused a lot of confusion for some developers.

Yeah, it sounds like they have a circuit in there that lets you switch between a full-speed clock (for CGB native code) and a /2 clock (for DMG compatibility). I suspect the reason they talked in machine cycles is simply that earlier Nintendo machines like the Famicom used a 6502-based core, and the 6502 is a 1T CPU, so the developers were used to thinking in those terms. Sure, they could have said (arguably more accurately) 'The CPU is clocked at 4.19 MHz, but takes 4 clock cycles per machine cycle, giving an effective machine cycle rate of 1.05 MHz' - but a developer doesn't need to know that; they just need to know how fast the CPU runs from a software perspective.

When I was writing my own Game Boy emulator this did cause some confusion for me. It was mainly because I was coming from the point of view of the NES and its 6502-based CPU.

So I had some issues with the different timing terms; I think at first I was actually emulating the CPU as though it were significantly slower, because I had the relationship between CPU time and PPU time wrong. But if you were coming at it as a developer of Game Boy/Game Boy Color games, I don't see how this would be terribly confusing. Double-speed mode is pretty clear.
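One common way an emulator keeps the CPU and PPU in step is to convert each instruction's machine cycles into PPU clocks (dots) before advancing the PPU. The Python below is a hypothetical minimal scheduler, assuming 4 dots per machine cycle at normal speed, 2 in CGB double-speed mode, and a 70,224-dot frame; the `Ppu` class and `dots_for_m_cycles` helper are illustrative names, not taken from any particular emulator.

```python
from dataclasses import dataclass
from typing import ClassVar

DOTS_PER_M_CYCLE_NORMAL = 4  # PPU dots per CPU machine cycle at normal speed
DOTS_PER_M_CYCLE_DOUBLE = 2  # CPU runs 2x faster, PPU does not


def dots_for_m_cycles(m_cycles: int, double_speed: bool = False) -> int:
    """Convert CPU machine cycles into PPU dots (master clocks)."""
    per_m = DOTS_PER_M_CYCLE_DOUBLE if double_speed else DOTS_PER_M_CYCLE_NORMAL
    return m_cycles * per_m


@dataclass
class Ppu:
    """Hypothetical PPU stub that only tracks its position in the frame."""
    dot: int = 0     # dots elapsed in the current frame
    frames: int = 0  # completed frames
    DOTS_PER_FRAME: ClassVar[int] = 456 * 154  # 70,224

    def advance(self, dots: int) -> None:
        self.dot += dots
        while self.dot >= self.DOTS_PER_FRAME:
            self.dot -= self.DOTS_PER_FRAME
            self.frames += 1


if __name__ == "__main__":
    ppu = Ppu()
    # e.g. after the CPU executes a 2-machine-cycle instruction such as LD A,(HL):
    ppu.advance(dots_for_m_cycles(2))
    print(ppu)
```

Under these assumptions, running 17,556 machine cycles (for example, 17,556 NOPs) completes exactly one frame in normal mode but only half a frame in double-speed mode.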

It also doesn't really matter whether the CPU is described as running at 4 MHz in one terminology or 1 MHz in another. You'd develop your game and test it on the hardware.

Now, if you were trying to do very tricky timing things like on the NES, then yes, timing is very important. But if I remember right, the Game Boy addressed some of those things, making them less tricky to do. Also, I would imagine that most Game Boy games were programmed by people who had already programmed previous Game Boy games, and if they hadn't, they at least had some prior background developing software. It's not like they couldn't figure out what they needed to know to get things to work.

Yeah, the timing on the DMG CPU is a lot simpler than on either the 8080 or the Z80 - on both of those, different types of machine cycles took different numbers of clocks, and the counts varied by instruction.

For example, on the Z80 the M1 cycle was 4-6 clocks (assuming no wait states) and the subsequent cycles were 3-5 clocks. The DMG CPU always has machine cycles that are exactly 4 clocks long, and I suspect this is the reason that it doesn't implement most of the Z80 architectural extensions.
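The difference can be illustrated by adding up clock counts for a few instructions. This Python sketch uses the standard published timings (Z80 with no wait states, and the DMG CPU's uniform 4-clock machine cycles); the table covers only a handful of opcodes and the names are illustrative.

```python
# Published clock (T-state) counts per machine cycle, assuming no wait states.
Z80_M_CYCLE_CLOCKS = {
    "NOP": [4],           # M1 opcode fetch only
    "LD A,n": [4, 3],     # M1 + operand read
    "LD A,(HL)": [4, 3],  # M1 + memory read at (HL)
    "INC HL": [6],        # single M1 stretched to 6 clocks
    "JP nn": [4, 3, 3],   # M1 + two operand reads
}

# DMG CPU: every machine cycle is exactly 4 clocks, so only the count matters.
DMG_M_CYCLES = {"NOP": 1, "LD A,n": 2, "LD A,(HL)": 2, "INC HL": 2, "JP nn": 4}
DMG_CLOCKS_PER_M_CYCLE = 4


def z80_clocks(instr: str) -> int:
    """Total Z80 clocks: sum of variable-length machine cycles."""
    return sum(Z80_M_CYCLE_CLOCKS[instr])


def dmg_clocks(instr: str) -> int:
    """Total DMG clocks: machine-cycle count times a fixed 4 clocks."""
    return DMG_M_CYCLES[instr] * DMG_CLOCKS_PER_M_CYCLE


if __name__ == "__main__":
    for instr in Z80_M_CYCLE_CLOCKS:
        print(f"{instr:10} Z80: {z80_clocks(instr):2} clocks   DMG: {dmg_clocks(instr):2} clocks")
```

For example, `LD A,(HL)` is 7 clocks on a Z80 (a 4-clock M1 plus a 3-clock read) but 8 clocks on the DMG, and `INC HL` uses a stretched 6-clock M1 on the Z80 but two uniform 4-clock machine cycles on the DMG.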